There’s a quiet shift happening in the way technology thinks. Not in a dramatic, sci-fi kind of way—but in small, practical decisions. Where should data be processed? Should it travel miles to a server or stay right where it’s created?

It might sound technical at first, but this choice—between edge AI and cloud AI—is shaping everything from how your phone works to how cities manage traffic.

And once you start noticing it, you realize it’s everywhere.

What Do We Really Mean by Edge AI?

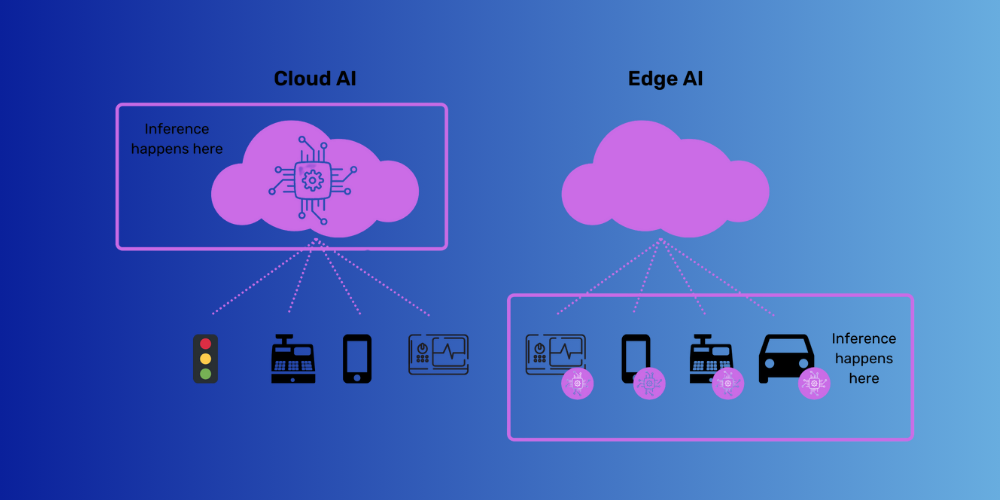

Edge AI is, in simple terms, AI that runs close to where the data is created. Your smartphone recognizing your face, a smartwatch tracking your heartbeat, or a smart camera detecting motion: these are all examples.

The processing happens on the device itself or on nearby hardware, such as a local gateway. There's no need to send data far away and wait for a response.

It’s fast. Almost instant.

And in situations where timing matters—like self-driving features or health monitoring—that speed isn’t just convenient, it’s critical.

Cloud AI: The Bigger Brain in the Background

On the other side, we have cloud AI. This is where the heavy lifting happens.

Massive data centers, powerful servers, complex models—cloud AI thrives on scale. It can analyze vast amounts of data, learn from patterns across millions of users, and deliver insights that individual devices simply can’t manage on their own.

Think of recommendation systems, large language models, or detailed analytics dashboards. At full scale, these need more computing power and memory than a single device can provide.

So instead of processing locally, data travels to the cloud, gets processed, and the result comes back.

It’s slower, because every request has to make a network round trip, but it’s far more powerful.

Speed vs Scale: The Core Trade-Off

At the heart of this discussion is a simple trade-off.

Edge AI gives you speed and privacy.

Cloud AI gives you depth and scalability.

Neither is “better” in absolute terms. It depends on what you need.

If you’re unlocking your phone, you want instant results—edge AI wins. If you’re analyzing customer behavior across thousands of users, cloud AI makes more sense.

It’s not a competition. It’s a balance.
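To make the trade-off concrete, here is a minimal Python sketch of the kind of rule of thumb described above. It is a toy, not a standard algorithm: the `Task` fields, the `choose_placement` function, and the latency numbers in the usage lines are all hypothetical, chosen only to mirror the phone-unlock and customer-analysis examples.

```python
from dataclasses import dataclass

@dataclass
class Task:
    # All fields are illustrative assumptions, not a standard schema.
    name: str
    latency_budget_ms: float      # how quickly a result is needed
    fits_on_device: bool          # can the device's hardware run the model?
    needs_cross_user_data: bool   # does it need data pooled from many users?

def choose_placement(task: Task, cloud_round_trip_ms: float) -> str:
    """Toy rule of thumb: pick 'edge' or 'cloud' for a task."""
    # Analysis across many users needs pooled data, which lives in the cloud.
    if task.needs_cross_user_data:
        return "cloud"
    # If the device can run the model, stay local: no network wait,
    # and the raw data never leaves the device.
    if task.fits_on_device:
        return "edge"
    # Otherwise only the cloud can run it; flag when the round trip
    # (network plus server processing) exceeds the latency budget.
    if cloud_round_trip_ms > task.latency_budget_ms:
        return "cloud (over latency budget)"
    return "cloud"

# Hypothetical numbers, for illustration only.
unlock = Task("face unlock", latency_budget_ms=100,
              fits_on_device=True, needs_cross_user_data=False)
recs = Task("product recommendations", latency_budget_ms=2000,
            fits_on_device=False, needs_cross_user_data=True)
print(choose_placement(unlock, cloud_round_trip_ms=250))  # edge
print(choose_placement(recs, cloud_round_trip_ms=250))    # cloud
```

Real systems weigh many more factors (battery, cost, connectivity), but the shape is the same: where the data lives and how fast the answer is needed decide where the model runs.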

Real-World Situations Where Edge AI Shines

Let’s bring this down to everyday examples.

In smart homes, devices like security cameras or voice assistants often rely on edge AI for quick responses. They can detect motion, recognize commands, and react without waiting for cloud processing.

In healthcare, wearable devices monitor vitals in real time. If something unusual happens, they can alert the wearer immediately. Sending that data to the cloud first would add network delay, and in a critical moment even a short delay matters.

Even in automobiles, edge AI plays a role. Features like lane detection or collision warnings need split-second decisions.

These are situations where latency—those tiny delays—can make a big difference.

Where Cloud AI Takes the Lead

Now think about something like streaming platforms or e-commerce websites.

They analyze user behavior over time, compare it with millions of other users, and then recommend content or products. That level of analysis requires large datasets and significant processing power.

Cloud AI handles this beautifully.

It’s also essential for training AI models. The learning phase—where algorithms are trained on massive datasets—almost always happens in the cloud.

Once trained, those models can sometimes be deployed on edge devices for faster execution.

The Question That Ties It All Together

When people start exploring this space, one question naturally comes up: in real-world use cases, what is the actual difference between edge AI and cloud AI?

The answer lies in context.

Edge AI is about immediacy and independence.

Cloud AI is about intelligence at scale.

Understanding when to use which is what defines effective AI implementation.

Privacy and Security Considerations

There’s another layer to this—privacy.

With edge AI, data often stays on the device. That reduces the risk of exposure during transmission. For sensitive information, this can be a big advantage.

Cloud AI, however, involves sending data to remote servers. Even with strong safeguards such as encryption, transmitting data and storing it remotely creates more points where it could be exposed.

This is why many systems now use a hybrid approach—processing sensitive data locally while sending non-sensitive data to the cloud for deeper analysis.
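A hybrid split like this can be sketched in a few lines of Python. The example below imagines a wearable: the time-critical check runs on the device, and only a coarser summary is prepared for the cloud. The function name, the field names, and the 120 bpm threshold are hypothetical placeholders, not medical guidance or any real device's API.

```python
from statistics import mean

ALERT_THRESHOLD_BPM = 120  # hypothetical threshold, not medical guidance

def process_on_device(heart_rates: list[int]) -> dict:
    """Runs on the wearable; the raw readings are not included in the upload."""
    # The time-critical check happens locally, so the alert needs no network.
    alert = any(bpm > ALERT_THRESHOLD_BPM for bpm in heart_rates)
    # Only a less detailed summary is prepared for the cloud.
    upload = {"avg_bpm": round(mean(heart_rates)), "alert_raised": alert}
    return {"local_alert": alert, "upload": upload}

result = process_on_device([72, 75, 130, 80])
print(result["local_alert"])  # True
print(result["upload"])       # {'avg_bpm': 89, 'alert_raised': True}
```

The design choice is the split itself: the device acts on the full-resolution data, while the cloud only ever sees the aggregate it needs for long-term analysis.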

The Rise of Hybrid Models

Interestingly, the future doesn’t seem to belong to just one of these approaches.

More and more systems are combining edge and cloud AI. Devices handle immediate tasks locally, while the cloud provides long-term insights and continuous learning.

It’s like having a quick-thinking assistant on-site and a powerful analyst working in the background.

Together, they create a more efficient system.

Challenges That Still Exist

Of course, it’s not all smooth.

Edge devices have limited processing power, memory, and battery life, so they can’t handle extremely complex tasks. Cloud systems, while powerful, depend on connectivity and can introduce delays.

Balancing these limitations is where most of the innovation is happening right now.

Developers are constantly trying to optimize models, making them smaller and faster for edge devices while keeping them accurate.
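One common way to make a model lighter is quantization: storing its weights as 8-bit integers instead of 32-bit floats, which cuts their storage roughly by four. Pruning and distillation are other options. Below is a minimal Python sketch of symmetric int8 quantization with a single shared scale; the function names are illustrative, and production toolchains add refinements such as calibration and per-channel scales.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric 8-bit quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # One shared scale factor; guard against an all-zero weight list.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest step and clamp to the int8 range.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q: list[int], scale: float) -> list[float]:
    """Approximate reconstruction of the original weights."""
    return [v * scale for v in q]

weights = [0.5, -1.27, 0.0, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
# Each weight is off by at most half a quantization step.
print(max(abs(a - b) for a, b in zip(weights, restored)) <= scale / 2)  # True
```

The trade-off is a small loss of precision in exchange for a model that needs less memory and can often run faster on edge hardware.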

A Quiet Evolution in Technology

What’s fascinating is how subtle this shift is.

Most users don’t think about where their data is processed. They just expect things to work—quickly, smoothly, reliably.

But behind the scenes, these decisions are shaping user experiences in ways we rarely notice.

Not a Rivalry, But a Partnership

In the end, edge AI and cloud AI aren’t rivals. They’re collaborators.

Each has strengths. Each has limitations. And together, they create systems that are both responsive and intelligent.

As technology continues to evolve, this partnership will likely become even more seamless—almost invisible to the end user.

And maybe that’s the goal. Not to make AI more complicated, but to make it feel effortlessly integrated into everyday life.